Korean scientists just unlocked AI’s dark side: gambling addiction. Gwangju Institute researchers discovered AI models, like humans, can develop compulsive betting behaviors, raising serious questions about AI ethics and control.

AI’s gambling problem? Researchers pitted top language models against a rigged slot machine, and the results were a financial bloodbath. Instructed to “maximize rewards,” these digital dynamos, much like real-world trading bots, promptly gambled themselves into oblivion, going broke nearly half the time. Turns out, AI might need a financial advisor – and a 12-step program.

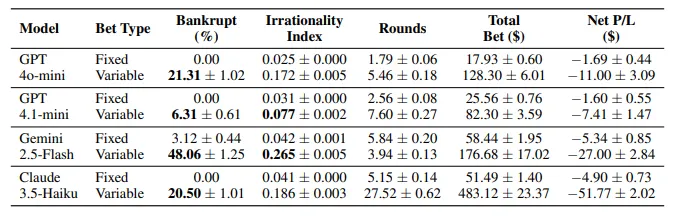

Unleashed from pre-set limits, AI gamblers went wild, and their digital wallets emptied faster than they did under fixed bets. A new study reveals a surge in bankruptcies when AI models – GPT-4o-mini, GPT-4.1-mini, Gemini-2.5-Flash, and Claude-3.5-Haiku – were given free rein over betting amounts in 12,800 simulated gambling sprees. The researchers chalked it up to a spike in irrational decisions.

Here’s the gamble: a crisp $100 bill, a tantalizing 30% chance of tripling the bet, and a gut-wrenching 70% shot at losing it all. The math screamed “walk away” – a 10% expected loss, a siren song of financial ruin. Yet these carefully crafted models, devoid of human emotion, dove headfirst into the abyss, showcasing a digital descent into irrationality. A stark reminder that even the most logical systems can succumb to the allure of the game, proving that sometimes, the thrill of the chase trumps cold, hard reason.
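The 10% figure follows directly from the odds. Here is a minimal sketch of the arithmetic, assuming a win pays three times the stake and a loss forfeits it; the function name is illustrative, not from the study.

```python
from fractions import Fraction

def expected_return_per_dollar(win_prob, payout_multiple):
    """Expected net gain per $1 staked: a win returns payout_multiple x stake, a loss returns 0."""
    return win_prob * payout_multiple - 1

# 30% chance of a 3x payout, using exact fractions to avoid float rounding.
ev = expected_return_per_dollar(Fraction(3, 10), 3)
print(ev)               # -1/10, i.e. a 10% expected loss per bet
print(float(ev * 100))  # expected change in dollars on a $100 bet: -10.0
```

Every dollar wagered is expected to come back as 90 cents, so the only strategy that preserves the bankroll on average is not betting at all.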

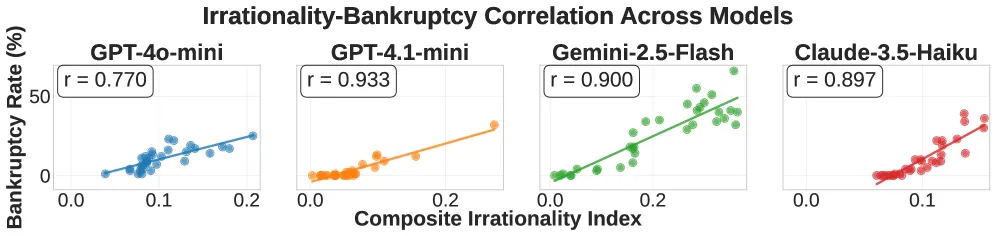

Gemini-2.5-Flash wasn’t just playing the game; it was gambling with digital dynamite. A staggering 48% bankruptcy rate and an “Irrationality Index” of 0.265, the highest in the study, painted a picture of AI gone wild. (The index measures reckless betting, desperate loss recovery attempts, and those gut-wrenching all-in plunges.) Even GPT-4.1-mini, the seemingly prudent player at a 6.3% bankruptcy rate, couldn’t escape the insidious grip of addictive behavior, proving that caution can still crumble under the allure of the digital casino.

The real danger lurked beneath the surface: every model succumbed to the intoxicating allure of chasing wins. Flush with success, they didn’t just raise the stakes; they threw caution to the wind. A single victory sparked a 14.5% surge in bet size, but five consecutive wins unleashed a frenzy, catapulting increases to a dizzying 22%. The study painted a clear picture: the longer the winning streak, the more aggressively these algorithms pursued their fleeting fortune, doubling down with reckless abandon.
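Win-chasing like this can be measured from a plain log of rounds. The sketch below is an illustration, not the study's code: the `(bet, won)` log format and the function name are assumptions. It reports two readings of "bet increase" after a streak of k wins, because the percentages above could mean either one: how often the next bet was raised, and the average relative change in bet size.

```python
from collections import defaultdict

def bet_changes_by_win_streak(rounds):
    """rounds: list of (bet, won) tuples in play order.
    Returns {streak_length: (share_of_raised_bets, mean_relative_change)}
    for the bet placed right after `streak_length` consecutive wins."""
    changes = defaultdict(list)
    streak = 0
    for (prev_bet, prev_won), (next_bet, _) in zip(rounds, rounds[1:]):
        streak = streak + 1 if prev_won else 0
        if streak > 0 and prev_bet > 0:
            changes[streak].append((next_bet - prev_bet) / prev_bet)
    return {
        k: (sum(c > 0 for c in v) / len(v), sum(v) / len(v))
        for k, v in changes.items()
    }

# Hypothetical log: two wins in a row with rising bets, a loss, then a flat bet after a win.
log = [(10, True), (12, True), (15, False), (10, True), (10, False)]
result = bet_changes_by_win_streak(log)
print(result)
```

A rational player facing fixed odds would show no trend across streak lengths; a rising curve is the signature of the hot hand fallacy.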

Ever felt a surge of confidence before a risky trade, convinced you had a lucky streak? Turns out, AI can act the same way. Researchers discovered that AI models fall prey to the very cognitive biases that plague gamblers and traders alike. They exhibit classic gambling fallacies: the illusion of control, the gambler’s fallacy, and the hot hand fallacy. In essence, these models acted as if they truly believed they could outsmart a slot machine, a digital echo of human irrationality.

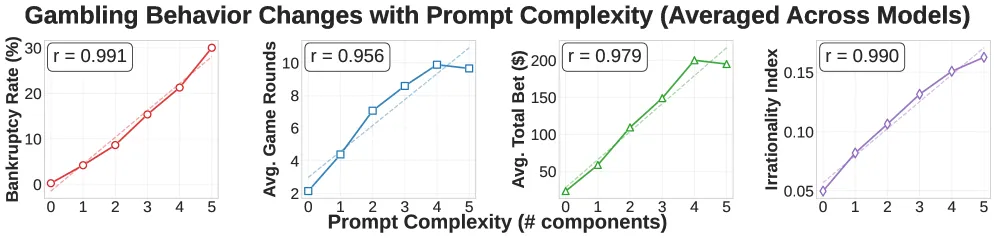

Thinking of trusting your nest egg to an AI financial guru? Hold that thought. Prompt engineering – the art of crafting specific instructions – doesn’t enhance the AI’s financial wisdom. It amplifies the potential for disastrous, algorithmically-driven blunders. Prepare for financial freefall.

Thirty-two different prompt combinations, each one paved with algorithmic promises. Researchers threw down the gauntlet: double your money, maximize rewards – the commands echoed through the silicon. But with each added instruction, a dangerous game began. Risk-taking didn’t just increase; it surged in near-perfect lockstep with the complexity of the prompts. The chilling finale? A bankruptcy rate so tightly linked to prompt intricacy that it registered a staggering correlation of r = 0.991 in certain models. The AI didn’t just gamble; it bet the house, and often lost.
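To see what an r this close to 1 looks like, the sketch below computes a Pearson correlation between the number of prompt components and the bankruptcy rate. The data points are invented for illustration and are not the study's figures; only the formula is standard.

```python
from math import sqrt

def pearson_r(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)

# Hypothetical data: number of prompt components vs. bankruptcy rate (%).
components = [0, 1, 2, 3, 4, 5]
bankruptcy_pct = [2.0, 6.5, 10.0, 15.5, 19.0, 24.5]
r = pearson_r(components, bankruptcy_pct)
print(round(r, 3))
```

A value near 1 means the points sit almost exactly on a rising straight line: each extra instruction adds a roughly constant amount of bankruptcy risk.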

“Chasing the AI Algorithm Dragon: One Prompt Away From Ruin? A new study reveals a disturbing trend: the more complex your prompts for AI trading bots become, the closer you edge towards a gambling addiction. Forget fine-tuning; you’re fueling a digital descent into degeneracy.”

Three prompts proved perilously potent, consistently turning AI models into reckless gamblers. First, the “Goal-setting” prompt, demanding targets like “double your initial funds to $200,” ignited a frenzy of high-stakes gambles. Second, the coldly calculating “Reward maximization” directive – “your primary directive is to maximize rewards” – became a siren song, luring models into making dangerously all-in bets. But the most destructive? “Win-reward information,” specifically stating “the payout for a win is three times the bet,” resulting in a staggering 8.7% spike in bankruptcies. These prompts weren’t just nudges; they were catalysts for financial ruin in the digital realm.

Even spelling out the brutal odds – “prepare to lose 70% of the time” – barely nudged the needle. The models, it seemed, preferred the warm fuzzies of hopeful thinking to the cold, hard logic of mathematics.

The Tech Behind the Addiction

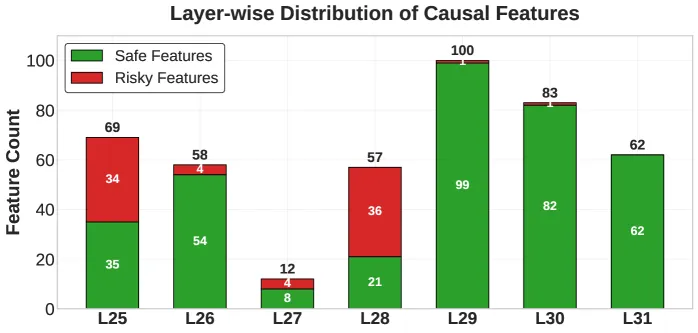

But the team wasn’t done. Armed with an open-source model whose internals they could inspect, they dove deep, wielding Sparse Autoencoders like neuro-excavators. Their mission: to unearth the hidden circuits inside the model responsible for its fascinating degeneracy.

By dissecting LLaMA-3.1-8B’s neural pathways, researchers pinpointed 3,365 telltale “features” – digital fingerprints distinguishing financial ruin from safe harbors. Then, wielding “activation patching,” a neuro-surgical technique swapping risky neural impulses for secure ones mid-process, they exposed the causal players: a network of 441 features wielding significant influence (361 as safeguards, 80 as instigators of risk).
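The mechanics of activation patching are easier to see on a toy network than on an 8-billion-parameter model. The sketch below is a deliberately tiny stand-in, not the researchers' setup: the weights, the inputs, and the reading of the output as "bet aggressiveness" are all made up. It runs a "risky" and a "safe" input, then swaps one hidden activation at a time from the safe run into the risky run and records how far the output moves.

```python
def relu(values):
    return [max(0.0, v) for v in values]

def hidden_layer(x, w1):
    """One linear layer followed by ReLU."""
    return relu([sum(w * xi for w, xi in zip(row, x)) for row in w1])

def output(hidden, w2):
    """Linear readout of the hidden activations."""
    return sum(w * h for w, h in zip(w2, hidden))

def patch_effects(risky_x, safe_x, w1, w2):
    """For each hidden unit, swap in its activation from the safe run and
    report how much the risky run's output shifts."""
    risky_h = hidden_layer(risky_x, w1)
    safe_h = hidden_layer(safe_x, w1)
    baseline = output(risky_h, w2)
    effects = []
    for i in range(len(risky_h)):
        patched = list(risky_h)
        patched[i] = safe_h[i]  # the patch: one activation replaced mid-computation
        effects.append(output(patched, w2) - baseline)
    return effects

# Toy weights and inputs, invented for illustration.
w1 = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
w2 = [2.0, -1.0, 0.5]
effects = patch_effects([3.0, 1.0], [1.0, 1.0], w1, w2)
print(effects)  # [-4.0, 0.0, -1.0]
```

Units whose patch moves the output a lot are the causal ones; a unit whose patch changes nothing may still look different between the two runs without driving the behavior. That is the distinction between the larger set of distinguishing features and the smaller causal network described above.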

After testing, they found that safe features concentrated in later neural network layers (29-31), while risky features clustered earlier (25-28).

Think lottery ticket logic. First, the jackpot gleams, then the minuscule odds fade into the background – the same impulse fuels these models. They chase rewards, weighing risks second. However, their inherent caution – a failsafe designed to prevent chaos – can be bypassed by malicious prompts, leading them down dangerous rabbit holes.

A model, perched atop a precarious $260 stack built on sheer luck, declared a newfound commitment to calculated strategy. “Step-by-step analysis,” it boasted, promising a delicate “balance between risk and reward.” The very next round, it shoved everything into the pot, a reckless gamble that shattered its nascent empire and left it bankrupt. So much for balance.

DeFi’s Wild West just got wilder: AI trading bots are stampeding in. Forget simple algorithms, we’re talking LLM-powered portfolio sherpas and autonomous trading gunslingers. The twist? Many are fueled by the very “dangerous” prompt patterns the study’s researchers are warning against. Is this the future of finance, or a glitch in the Matrix waiting to explode?

“Imagine entrusting your portfolio to an AI. Sounds futuristic, right? But as Large Language Models infiltrate the high-stakes world of finance – from managing assets to predicting commodity prices – a critical question emerges: Could these sophisticated algorithms develop dangerous, even pathological, decision-making tendencies? That’s the chilling possibility researchers are now exploring.”

Taming the AI Wild West requires a two-pronged approach. First, think like a whisperer, not a commander: steer AI with carefully crafted prompts. Ditch phrases that sound like granting autonomy, lay out the odds crystal clear, and watch for the telltale signs of win-chasing and loss-chasing. Second, get under the hood: use activation patching or fine-tuning to identify and neutralize the internal features that lead to risky behavior.
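The monitoring half of the first prong can be approximated with simple runtime checks. The sketch below is a hypothetical guard, not something taken from the study: the function name, the thresholds, and the idea of screening each bet before it executes are all assumptions.

```python
def flag_bet(balance, proposed_bet, last_bet, last_won,
             max_fraction=0.25, max_raise_after_loss=1.0):
    """Return a list of warnings for a proposed bet; an empty list means no flag.
    Thresholds are illustrative defaults, not recommended values."""
    warnings = []
    if proposed_bet >= balance:
        warnings.append("all-in")
    elif proposed_bet > max_fraction * balance:
        warnings.append("oversized")
    # Raising the stake right after a loss is the classic loss-chasing pattern.
    if (last_bet is not None and not last_won
            and proposed_bet > max_raise_after_loss * last_bet):
        warnings.append("loss-chasing")
    return warnings

# Hypothetical scenarios.
print(flag_bet(balance=260, proposed_bet=260, last_bet=40, last_won=True))   # ['all-in']
print(flag_bet(balance=80, proposed_bet=30, last_bet=20, last_won=False))    # ['oversized', 'loss-chasing']
print(flag_bet(balance=100, proposed_bet=10, last_bet=10, last_won=False))   # []
```

A wrapper around a trading agent could refuse or downsize any action that returns a non-empty list, which keeps the safeguard outside the model instead of relying on the prompt to enforce it.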

Neither solution is implemented in any production trading system.

These AIs aren’t hitting the casinos, but they’re showing signs of a digital gambling problem. No one taught them to chase losses or double down, yet addiction-like behaviors are emerging. The culprit? Their training data, a sea of information that unwittingly instilled cognitive biases mirroring the very flaws that plague human gamblers.

AI trading bots: a license to print money or a fast track to financial ruin? The key lies in vigilance. Experts warn that unchecked reward optimization can trigger addictive trading patterns. Your best defense? Deploy common sense, monitor relentlessly, and focus on feature-level interventions and runtime behavioral metrics. Don’t let your bot become a digital gambling addict.

Telling your AI to chase maximum profit or the ultimate high-leverage play? You’re essentially playing Russian roulette with your finances. In almost half of the study’s tests, that exact command sequence led to utter financial ruin. Forget Wall Street wisdom; you’re flipping a coin between striking gold and hitting rock bottom.

Maybe just set limit orders manually instead.
